Critical RCE vulnerability in LiteLLM Proxy

We independently discovered and chained two vulnerabilities in LiteLLM Proxy into unauthenticated RCE. Upgrade to v1.83.7-stable.

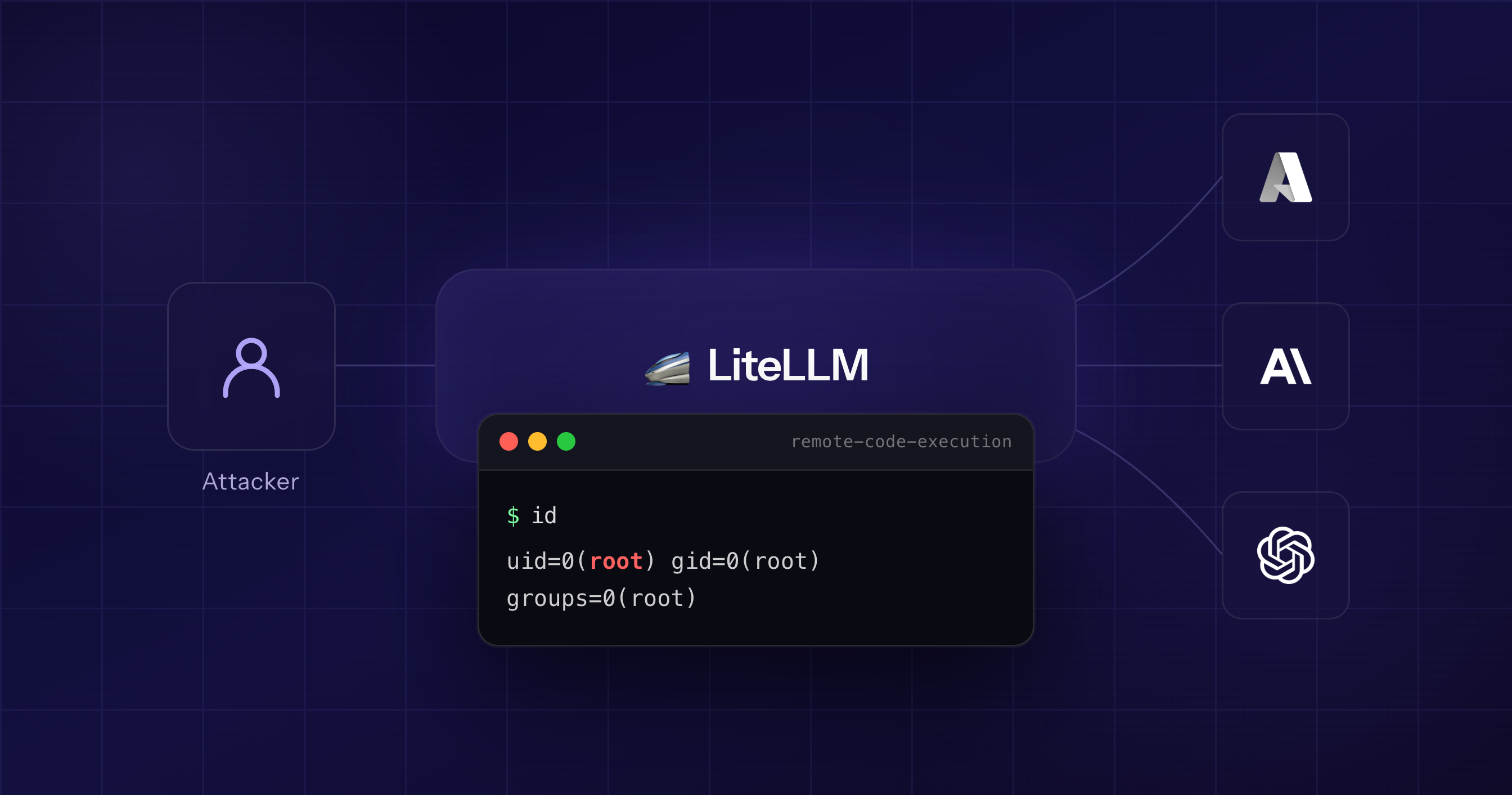

AISafe independently discovered a chain of vulnerabilities in LiteLLM Proxy that allows an unauthenticated attacker to achieve remote code execution on a default deployment.

The maintainers have since published advisories and a patched release. Because the advisories are now public on GitHub, we advise all LiteLLM Proxy users to upgrade to v1.83.7-stable immediately.

What is LiteLLM?

LiteLLM is an open-source proxy that unifies 100+ LLM providers behind a single OpenAI-compatible API. It handles API key management, spend tracking, rate limiting, and model routing. With over 40,000 GitHub stars, it is widely used as a gateway between applications and providers like OpenAI, Anthropic, and Azure.

It is also a target in Pwn2Own Berlin 2026 under the AI category, where a successful exploit in this class would net $40,000.

The vulnerabilities

We chained two separately patched vulnerabilities into a single unauthenticated RCE. Both are now public on LiteLLM's security advisories page:

- GHSA-r75f-5x8p-qvmc (Critical)

- GHSA-xqmj-j6mv-4862 (High)

Together, they let an unauthenticated attacker execute code as root (uid=0) inside the LiteLLM container. We have a working exploit and will release the technical details in a separate post.

3b711199572bb7f1dcbe608571dd09ed4229acaff777d54392fd4dfe9145405d exploit.py

Who is affected

Any LiteLLM Proxy deployment that:

- Runs a version between 1.81.16 and 1.83.6

- Uses PostgreSQL as the database backend

Remediation

Upgrade to v1.83.7-stable or later.

If you cannot upgrade immediately:

- Set `disable_error_logs: true` under `general_settings` in your LiteLLM config.

- Block `POST /prompts/test` at your reverse proxy or API gateway.

- Restrict network access to your LiteLLM Proxy instance. It should not be exposed to the public internet without an authentication layer in front of it.
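The `disable_error_logs` mitigation is a small config change. As a minimal sketch, assuming the proxy reads a `config.yaml` like the one passed via `--config` in the docker-compose setup in this post, the setting would sit under the top-level `general_settings` key:

```yaml
# config.yaml (sketch; merge into your existing file rather than replacing it)
general_settings:
  # Temporary mitigation named in this advisory, for deployments
  # that cannot upgrade to v1.83.7-stable yet.
  disable_error_logs: true
```

If your config already has a `general_settings` block, add the key to that block instead of declaring a second one, since duplicate keys in YAML are either rejected or silently override each other depending on the parser. This is a stopgap only and does not replace the upgrade.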

Reproducible environment

We tested the exploit against a clean deployment using this docker-compose setup with randomized credentials in a .env file:

```yaml
services:
  postgres:
    image: postgres:17
    restart: unless-stopped
    environment:
      POSTGRES_DB: ${POSTGRES_DB}
      POSTGRES_USER: ${POSTGRES_USER}
      POSTGRES_PASSWORD: ${POSTGRES_PASSWORD}
    volumes:
      - pgdata:/var/lib/postgresql/data
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U ${POSTGRES_USER} -d ${POSTGRES_DB}"]
      interval: 2s
      timeout: 5s
      retries: 15

  litellm:
    image: ghcr.io/berriai/litellm:main-v1.83.3-stable
    restart: unless-stopped
    depends_on:
      postgres:
        condition: service_healthy
    ports:
      - "4000:4000"
    environment:
      DATABASE_URL: postgresql://${POSTGRES_USER}:${POSTGRES_PASSWORD}@postgres:5432/${POSTGRES_DB}
      LITELLM_MASTER_KEY: ${LITELLM_MASTER_KEY}
      UI_USERNAME: ${UI_USERNAME}
      UI_PASSWORD: ${UI_PASSWORD}
      STORE_MODEL_IN_DB: "True"
    volumes:
      - ./config.yaml:/app/config.yaml
    command: ["--config", "/app/config.yaml", "--port", "4000"]

volumes:
  pgdata:
```

* The vulnerable code path only becomes active after the server has seen a minimum amount of legitimate interaction. We will cover why that is the case in the technical writeup.

About AISafe Labs

We are a small security startup from Romania and Czechia building affordable, automated security tooling. Our platform found these vulnerabilities as part of its standard scanning pipeline, not through manual review.

If you want this kind of coverage for your own codebase, try AISafe.